The structural tension between OpenAI and the United States Department of Defense (DoD) has shifted from a philosophical debate into a pragmatic labor crisis. Sam Altman’s public efforts to de-escalate friction with the Pentagon are not merely diplomatic maneuvers; they are a defensive strategy to stabilize a volatile internal culture. As key engineering talent migrates toward Anthropic, OpenAI faces a fundamental contradiction: the massive capital needed to pursue Artificial General Intelligence (AGI) pushes the company toward deep integration with the state, while the "safety-first" culture of its original workforce views such alignment as a violation of the company’s founding charter.

The Mechanism of Internal Decoupling

The exodus of OpenAI personnel to Anthropic is driven by a divergence in Organizational Risk Tolerance. This can be modeled as a conflict between three competing vectors:

- The Commercial Expansion Vector: The drive for revenue through enterprise and government contracts to fund R&D.

- The Safety/Alignment Vector: The technical and ethical guardrails designed to prevent catastrophic outcomes.

- The Geopolitical Alignment Vector: The pressure to ensure U.S. hegemony in AI development, often requiring military collaboration.

When the Geopolitical Alignment Vector accelerates, it creates friction with the Safety/Alignment Vector. Employees who view AI as a global public good perceive DoD partnerships as "dual-use" risks—technologies developed for peace that are inherently weaponizable. Anthropic has positioned itself as the primary beneficiary of this friction by maintaining a more rigid distance from offensive military applications, thereby capturing "alignment-focused" human capital.

The Cost of Talent Attrition in LLM Development

In the current AI development cycle, senior research talent is arguably scarcer than compute. The loss of a senior researcher at OpenAI is not just a loss of working hours; it is a loss of institutional memory about the failure modes of specific model architectures.

- Knowledge Persistence Loss: Every time a core researcher leaves for a competitor, that institutional memory travels with them, and the "delta" between the two companies’ capabilities shrinks.

- Recruitment Risk Premium: As OpenAI becomes more closely associated with the Pentagon, it must pay a higher premium to attract top-tier talent who may have ideological objections to defense work.

- The Anthropic Feedback Loop: Anthropic’s ability to market itself as a "safety research lab" allows it to acquire high-value talent at a lower relative cost, as the mission acts as a non-monetary subsidy.

Altman’s attempt to bridge this gap leans on "dual-use" terminology: the idea that AI can assist the Pentagon in cybersecurity and logistics without being integrated into kinetic kill chains. However, this distinction is hard to enforce technically once a model is deployed via API or on-premises infrastructure, because a general-purpose model does not know which downstream system consumes its output.

The Pentagon’s Requirement for Deterministic Output

The friction between Silicon Valley and the DoD is also rooted in a technical mismatch between LLM behavior and military requirements. The military operates on Deterministic Logic, whereas LLMs operate on Probabilistic Inference.
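The mismatch can be made concrete with a toy decoder. The sketch below is a minimal illustration, not any vendor's implementation: the three-token vocabulary, the logit values, and the function names are all hypothetical. It contrasts greedy decoding, which returns the same token on every run, with temperature sampling, which draws from a probability distribution and can return different tokens for identical input.

```python
import math
import random

# Hypothetical next-token candidates and model scores (assumed values).
TOKENS = ["hold", "proceed", "escalate"]
LOGITS = [2.0, 1.6, 0.3]


def softmax(logits, temperature=1.0):
    """Convert raw scores into a probability distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def greedy_decode(logits):
    """Deterministic: always pick the index of the highest score."""
    return max(range(len(logits)), key=lambda i: logits[i])


def sample_decode(logits, temperature, rng):
    """Probabilistic: draw one index according to the softmax weights."""
    probs = softmax(logits, temperature)
    return rng.choices(range(len(logits)), weights=probs, k=1)[0]


# Same input, 1000 runs each: collect the distinct answers produced.
greedy_runs = {TOKENS[greedy_decode(LOGITS)] for _ in range(1000)}
rng = random.Random(0)
sampled_runs = {TOKENS[sample_decode(LOGITS, 1.0, rng)] for _ in range(1000)}

print("greedy :", greedy_runs)   # a single distinct answer
print("sampled:", sampled_runs)  # more than one distinct answer
```

Greedy decoding removes the sampling randomness in this toy, but it says nothing about why the model ranked one token above another, which is a separate problem from repeatability.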

For OpenAI to successfully integrate with defense systems, it must solve the "Explainability Gap." The Pentagon requires a "Chain of Custody" for every decision made by an autonomous system. Current transformer architectures do not provide this. When Altman speaks of de-escalating tensions, he is likely addressing the Pentagon’s frustration with the "black box" nature of GPT-4, alongside the internal pushback from his own researchers.
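One narrow piece of a "Chain of Custody" can be sketched in code: a tamper-evident record of what a model was asked and what it returned. The example below is an assumption-laden illustration, not a description of any DoD or OpenAI system; the record fields and the names `append_record` and `verify_chain` are hypothetical. Each entry stores the SHA-256 hash of the previous entry, so any later edit breaks the chain.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder hash for the first record (assumed convention)
FIELDS = ("model_id", "prompt", "output", "prev_hash")


def _digest(body):
    """Hash the record body with stable key ordering."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_record(log, model_id, prompt, output):
    """Append one inference record linked to the previous record's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS_HASH
    body = {
        "model_id": model_id,
        "prompt": prompt,
        "output": output,
        "prev_hash": prev_hash,
    }
    log.append({**body, "hash": _digest(body)})
    return log


def verify_chain(log):
    """Return True only if every record is unmodified and correctly linked."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {key: record[key] for key in FIELDS}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


audit_log = []
append_record(audit_log, "model-a", "Summarize convoy delays", "Two routes delayed")
append_record(audit_log, "model-a", "Rank resupply options", "Option B, then A")
print(verify_chain(audit_log))  # True: nothing has been altered

audit_log[0]["output"] = "edited after the fact"
print(verify_chain(audit_log))  # False: the stored hash no longer matches
```

A log like this records that a given output was produced, not why the model produced it. It addresses custody, while the "Explainability Gap" concerns the reasoning inside the model and remains open.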

OpenAI’s strategy involves two distinct shifts:

- The Modularization of Safety: Separating the "core" reasoning engine from the "application" layer, allowing the defense-specific applications to have different safety tuning than the public-facing products.

- The Governance Pivot: Appointing board members with deep ties to the national security establishment to provide a veneer of "official" oversight, which theoretically lowers the perceived risk for government stakeholders while alienating the "AI Safety" purists within the ranks.
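The "Modularization of Safety" described above can be pictured as one shared engine wrapped by per-deployment policy layers. The following is a minimal sketch under stated assumptions: `core_engine` is a stand-in stub rather than a real model call, and the policy names and blocked-topic lists are invented for illustration. Real safety tuning happens largely in training and is far richer than keyword matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SafetyPolicy:
    """Hypothetical application-layer policy: a name plus blocked topics."""
    name: str
    blocked_topics: frozenset


def core_engine(prompt):
    """Stub for the shared reasoning engine (assumed; not a real API)."""
    return f"[model response to: {prompt}]"


def make_application(policy, engine=core_engine):
    """Wrap the shared engine with a deployment-specific policy check."""
    def respond(prompt):
        lowered = prompt.lower()
        if any(topic in lowered for topic in policy.blocked_topics):
            return f"refused under policy '{policy.name}'"
        return engine(prompt)
    return respond


# Two deployments share one engine but apply different policies.
public_policy = SafetyPolicy("public", frozenset({"network intrusion", "targeting"}))
defense_policy = SafetyPolicy("defense-logistics", frozenset({"targeting"}))

public_app = make_application(public_policy)
defense_app = make_application(defense_policy)

question = "Summarize network intrusion indicators from these logs"
print(public_app(question))   # refused by the stricter public policy
print(defense_app(question))  # passed through to the shared engine
```

The design choice worth noticing is that the engine is identical in both deployments. Only the wrapper differs, which is why critics inside the company can argue that the separation is organizational rather than technical.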

Measuring the 'Anthropic Support' Sentiment

Internal sentiment within OpenAI is not a monolith, but a bimodal distribution. On one side are the Product Realists, who believe that scale requires government partnership. On the other are the Alignment Idealists, who view Anthropic’s "Constitutional AI" approach as the only valid path forward.

Support for Anthropic among OpenAI staff is a leading indicator of Mission Drift. When employees "voice support" for a competitor, they are signaling that the competitor’s weighing of costs and benefits for humanity matches their own professional values more closely than their employer’s does. The resulting "Brain Drain" functions as a tax on OpenAI’s innovation speed.

The Capital-Defense Nexus

OpenAI’s transition from a non-profit to a "capped-profit" entity, and its subsequent pursuit of multibillion-dollar funding rounds, has fundamentally changed its relationship with the state. Scaling up at this magnitude requires three things that only the state or state-adjacent entities can provide:

- Regulatory Moats: Shielding the incumbent from smaller, open-source competitors.

- Energy Infrastructure: Access to the massive power grids required for next-generation data centers.

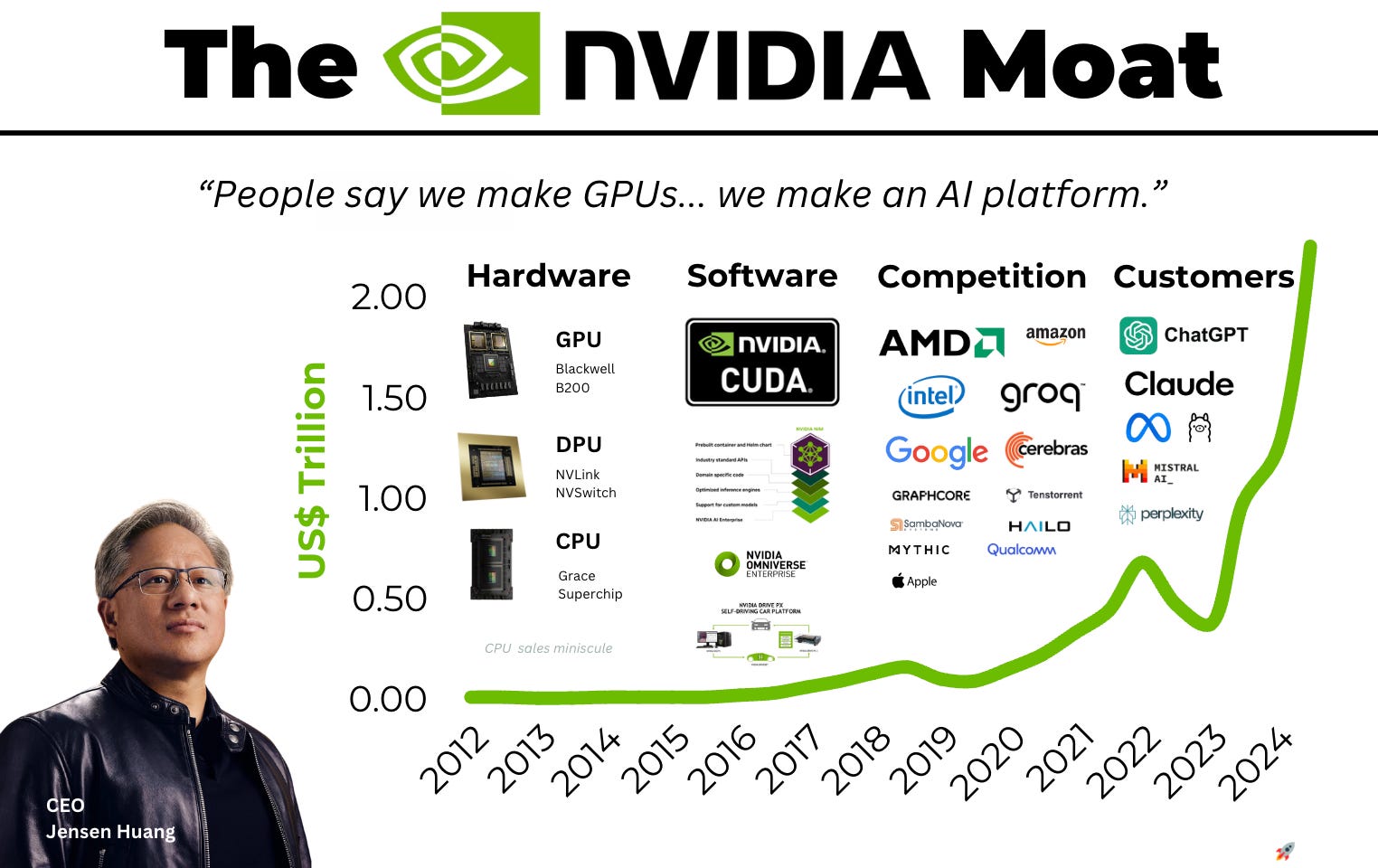

- Protectionist Policy: Ensuring that the compute supply chain (specifically H100/B200 chips) remains prioritized for their use cases.

The national security establishment, with the Pentagon at its center, has outsized influence over all three. Altman’s "de-escalation" is therefore a mandatory component of his capital strategy: he cannot build the chip infrastructure he has reportedly priced at up to $7 trillion, or secure a sovereign energy supply, without the blessing of the U.S. national security apparatus.

The strategic play for OpenAI is no longer about technical superiority alone; it is about managing a Three-Body Problem of talent retention, government alignment, and capital requirements. To stop the bleed to Anthropic, OpenAI must formalize a "Civilian-Military Divide" within its internal R&D.

This requires the creation of a "Skunk Works" division for defense—isolated from the core research teams—to prevent "mission contamination." By physically and operationally decoupling defense work from general AGI research, Altman may be able to satisfy the Pentagon's requirements while providing the "Safety" researchers with the ideological insulation they require to remain at the company. Failure to execute this decoupling will result in OpenAI becoming a de facto government contractor, while Anthropic absorbs the intellectual elite of the AI safety community.